💡 In This Guide:

If you have noticed that your website content shows up in ChatGPT, Google's AI Overviews, or Perplexity, you are not imagining things. Large Language Models (LLMs) are now a major source of traffic and visibility. But ranking in these AI systems is different from traditional SEO. This guide walks you through exactly how to get better results and higher rankings in LLMs using straightforward, human-friendly language. You will learn what LLM ranking actually means, how these models choose answers, and what you can do today to make your content more visible.

What "Ranking in LLMs" Actually Means

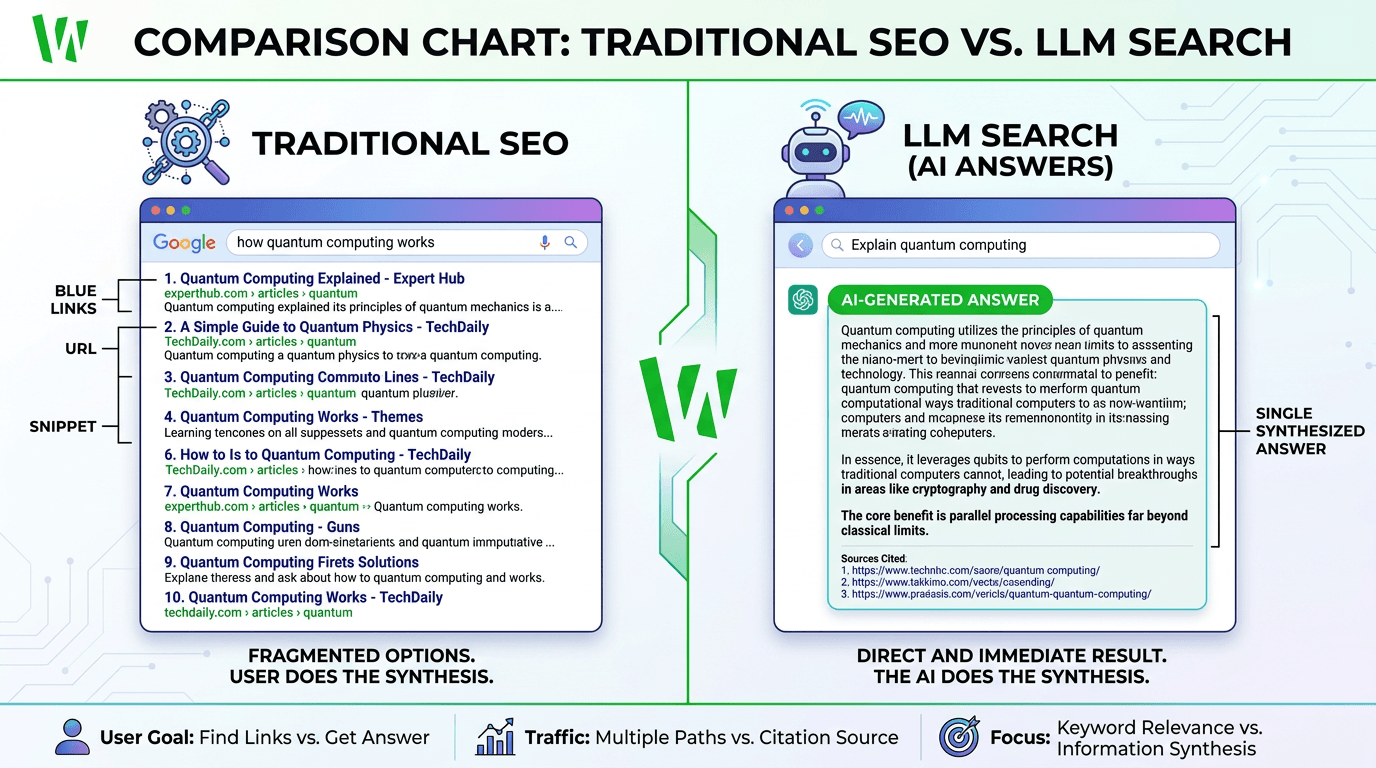

When someone types a question into Google, the search engine evaluates web pages on signals such as keyword relevance, backlinks, and site authority. Then it returns a ranked list of links, traditionally about ten per page. That is traditional search.

When someone asks ChatGPT or Google Gemini a question, the LLM does not return a list of links. It generates a single answer from what it learned in training and, where the product supports it, from documents retrieved in real time. Ranking in LLMs means your content is selected, summarized, or cited by the model when it generates a response. The practice of optimizing for this is sometimes called Generative Engine Optimization (GEO).

| Traditional SEO | LLM Optimization (GEO) |
|---|---|
| Keywords and backlinks | Entities and context |
| Page-level ranking | Whole-site authority |
| Click-through rate matters | Reference rate matters |
| 10 blue links returned | One generated answer returned |
| User clicks on your site | AI summarizes your content |

How LLMs Retrieve and Generate Answers

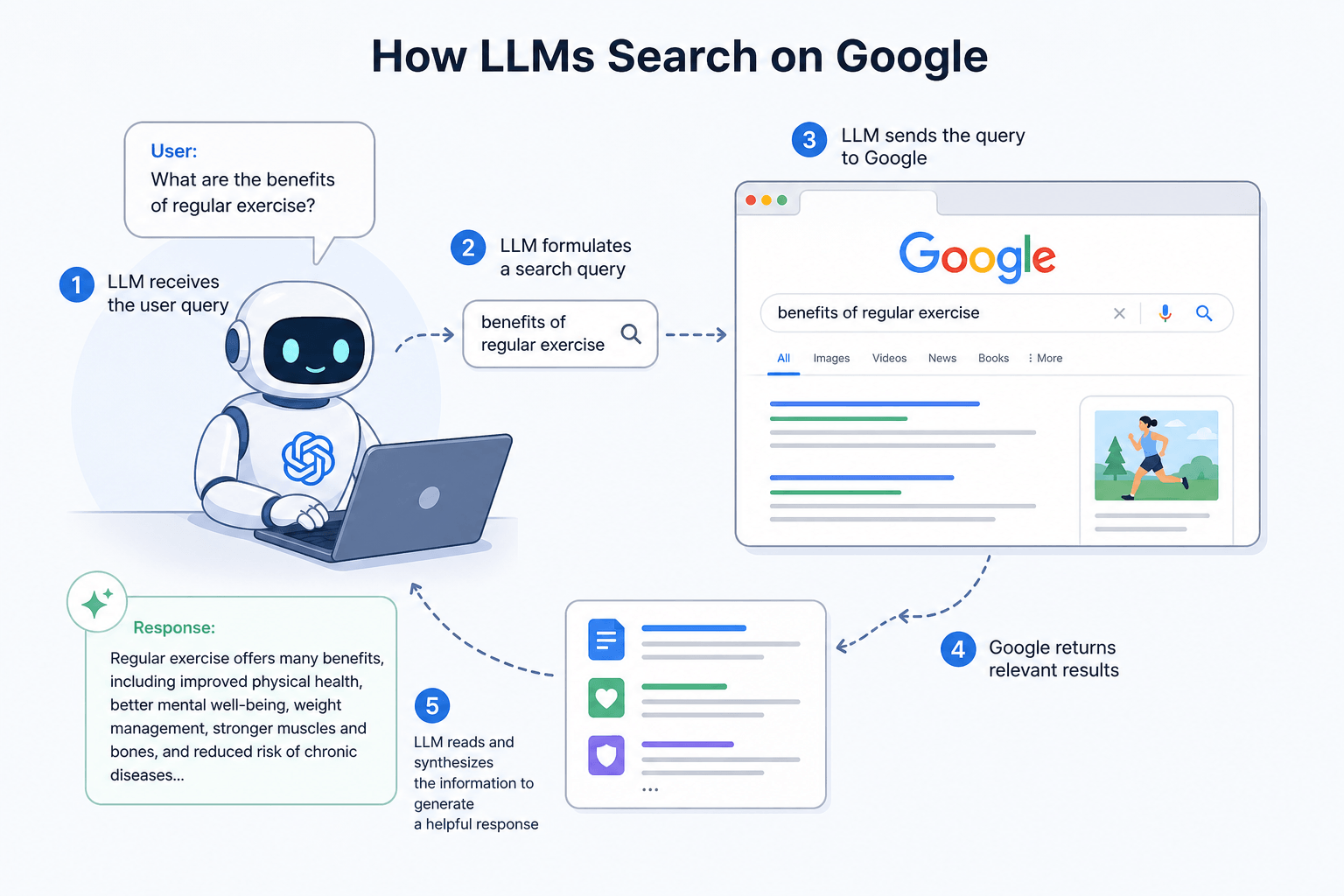

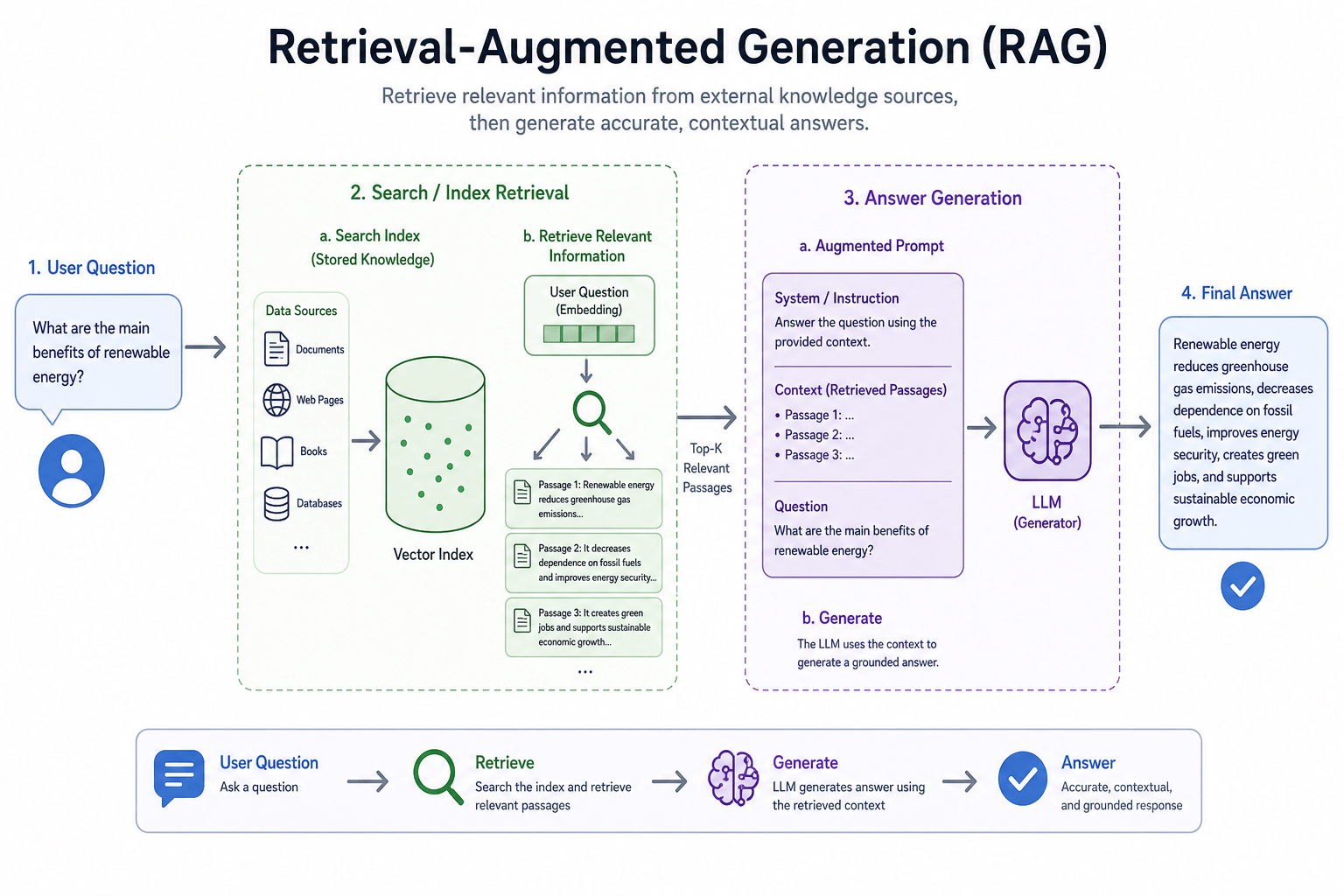

Many AI search products pair an LLM with a process called Retrieval-Augmented Generation (RAG). First, a retrieval system searches a large index of documents, websites, and databases. Then it selects the most relevant pieces of information. Finally, the model generates a natural language answer using those retrieved pieces.

Think of it like a smart librarian. The librarian looks through many books, picks the most useful paragraphs, and then rewrites them into a single helpful answer for you. The context window, typically between 8,000 and 128,000 tokens and larger in some newer models, determines how much text the model can consider at once.
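The retrieve-then-generate flow can be sketched in a few lines. This is a minimal illustration, assuming plain word overlap as the relevance score; real systems typically rely on search indexes or embedding similarity instead. The function names and the sample passages are hypothetical.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, passage: str) -> int:
    """Count the word occurrences that the query and the passage share."""
    query_counts = Counter(tokenize(query))
    passage_counts = Counter(tokenize(passage))
    # Counter intersection keeps the minimum count of each shared word.
    return sum((query_counts & passage_counts).values())


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages with the highest overlap score, best first."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str], k: int = 2) -> str:
    """Assemble the text a generator model would receive: sources, then the question."""
    sources = retrieve(query, passages, k)
    numbered = "\n".join(f"[{i}] {text}" for i, text in enumerate(sources, start=1))
    return f"Answer using only these sources:\n{numbered}\n\nQuestion: {query}"
```

Only the passages that make it into the prompt can influence the answer, which is why the rest of this guide focuses on making individual passages easy to match and easy to quote.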

What LLMs Look for During Retrieval

- Clear and direct answers to specific questions

- Content that matches the search intent behind the query, not just the keywords

- Information from sources that appear trustworthy and consistent

- Fresh content when the question is about recent events

- Well-structured formatting that separates definitions, steps, and examples

Key Ranking Factors in LLMs

Based on recent AI research and SEO case studies, these are the signals that most influence whether an LLM uses your content.

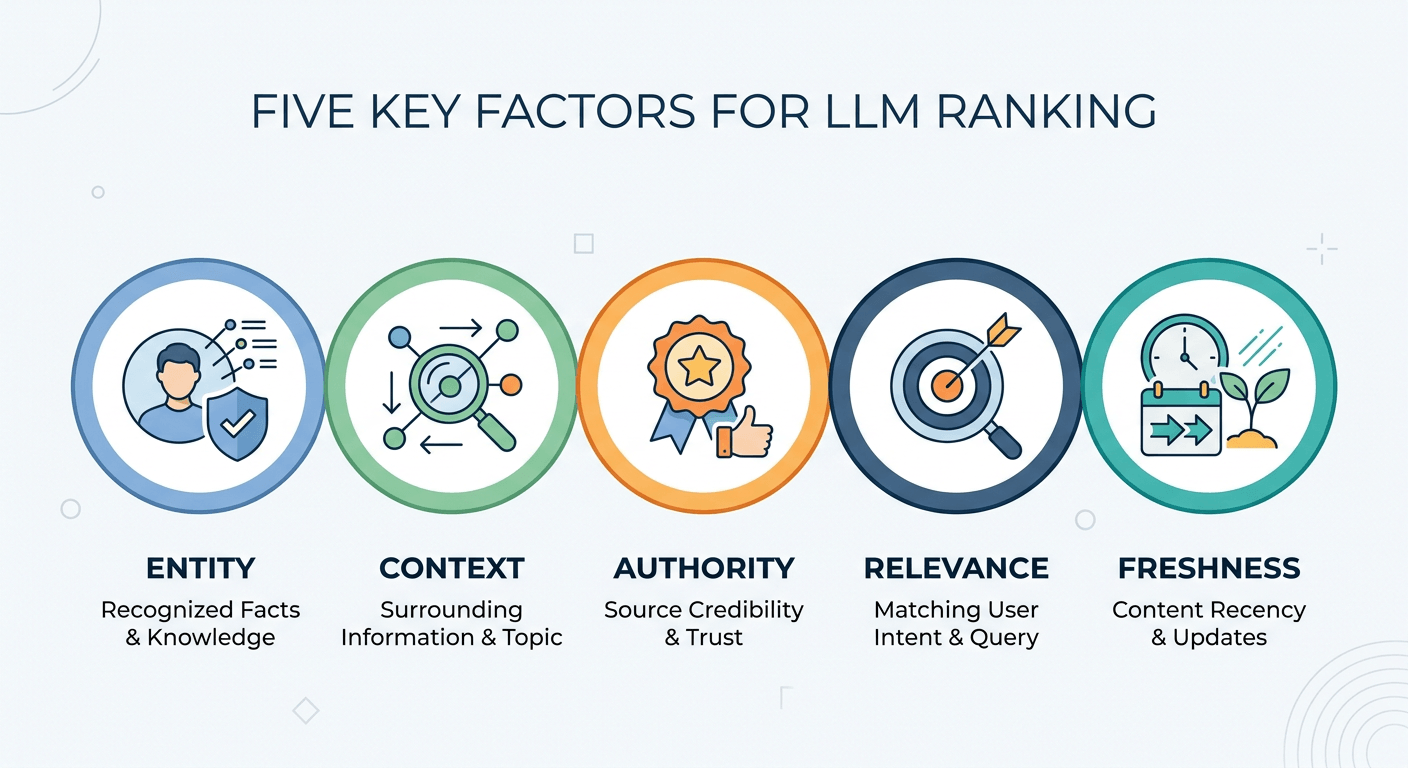

Entities

An entity is a specific person, place, thing, or concept with a clear identity. "Eiffel Tower" names an entity. "That big tower in Paris" only hints at one. LLMs prefer content that names entities clearly, connects them to related entities, and maintains high entity salience throughout the page.

Context

If you write about "Apple," does the model know you mean the fruit or the technology company? Surrounding words provide context. Good content builds context before introducing potentially confusing terms, improving contextual completeness across the page.

Authority

LLMs learn from training data which sources are frequently cited and trusted. Establish author authority through bios, credentials, and brand presence. If major publications reference your site, you appear more authoritative. Publishing false information works against you, because inaccurate sources are less likely to be cited.

Relevance

Your content must directly answer the question people are asking. Match the user's intent precisely, not just the wording of the query. Vague or off-topic content is unlikely to be retrieved.

Freshness

For news, product releases, or current events, LLMs prioritize recent content. Every 3 to 6 months, update older pages that still attract queries, as part of a healthy content lifecycle management strategy.

Google SEO vs LLM Optimization: Key Differences

Many people assume that good Google rankings automatically mean good LLM visibility. That is not always true. Google cares about satisfying a user's click. LLMs care about satisfying a user's question without making the user click anywhere.

| Priority | Google SEO | LLM Optimization |
|---|---|---|
| Primary signal | Keywords in title, headings, body | Entity connections across the site |
| Link strategy | Backlinks from relevant sites | Strong internal entity linking |
| Technical focus | Page speed and mobile friendliness | Structured data and schema markup |
| Content goal | Earn the click | Answer completely without a click |
| Tone requirement | Engaging for humans | Conversational yet professional |

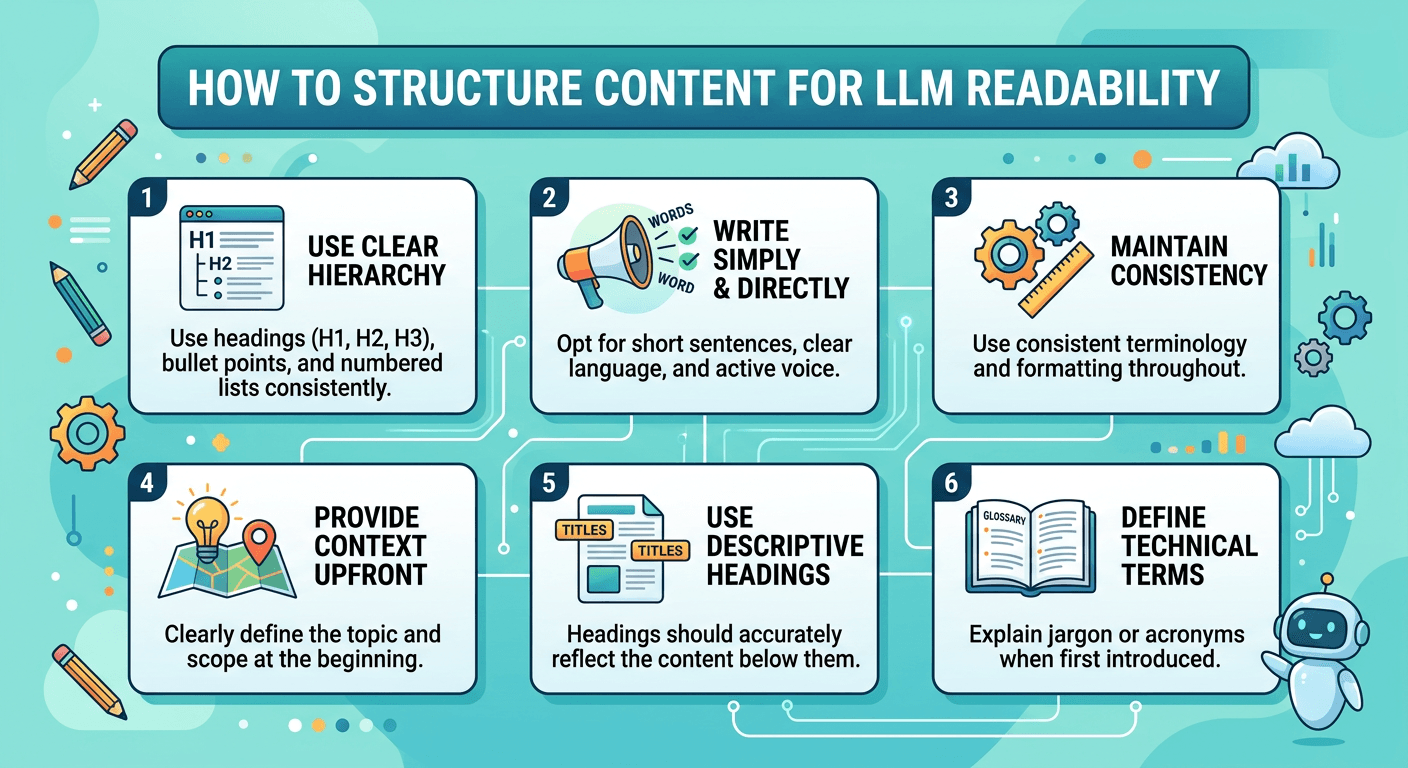

How to Structure Content for LLM Readability

LLMs work like fast readers who skim for clear signals. If your content is hard to scan, the model will move on to another source. The readability score should target grade 6 to 8 for most audiences.
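One way to estimate the grade level is the Flesch-Kincaid formula: 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The sketch below assumes a rough syllable heuristic (counting groups of vowels), so treat its output as an approximation; dedicated readability tools count syllables more accurately.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count groups of consecutive vowels, with a minimum of one."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Estimate the US school grade level of the text with the Flesch-Kincaid formula."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59
```

Short sentences built from short words push the score down; long sentences and multi-syllable vocabulary push it up.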

Best Structure for LLM-Friendly Content

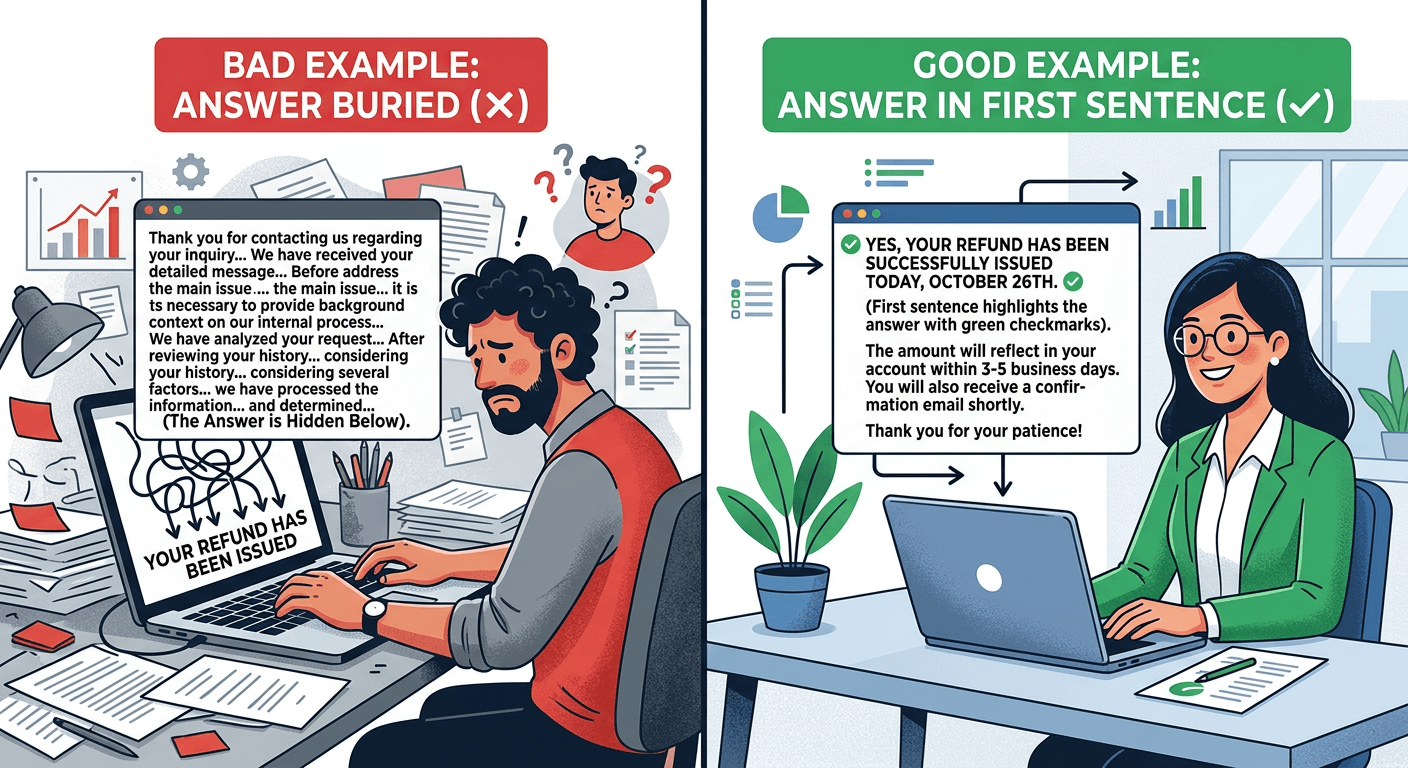

- Place your main answer in the first 100 words. Do not bury it. Put the direct answer up front.

- Use H2 headings to ask or answer a specific question. Each heading should be a clear topic signal.

- Keep paragraphs to 3 to 4 sentences maximum. Long paragraphs look like walls of text to an LLM.

- Use bullet points for lists. Use numbered lists for steps. This makes extraction much easier.

- Write definitions for key terms before using them. Definition clarity is critical for technical content.

- Maintain logical flow from general to specific. This helps the model follow your argument coherently.

Well-Formatted Definition Pattern

Tokenization is the process of breaking text into smaller pieces called tokens. When you understand tokenization, you can write clearer content that LLMs process more efficiently. Define terms first, then use them — never the reverse.

Entity-Based SEO: Build and Connect Entities Properly

Entities are the backbone of LLM understanding. If your content does not clearly identify and connect entities, the model cannot categorize you as an authority on any topic.

How to Build Entities Correctly

- Create a page for each important entity on your website. For a bakery, that means pages for "sourdough bread," "croissant recipe," and "baking temperature guide."

- Link between related entity pages. A sourdough bread page should link to the baking temperature guide. This tells the LLM these concepts belong together.

- Use the exact name of the entity. Do not say "the famous Paris tower." Say "Eiffel Tower." This ensures disambiguation clarity with no confusion between meanings.

- Add entity attributes. For "coffee," mention its types, origins, roasting levels, and caffeine content. The more attributes you connect, the better the model understands it.

- Align with knowledge graphs. Match your entity definitions to established databases like Wikidata or Google's Knowledge Graph for maximum compatibility.
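Aligning an entity with an established reference can be expressed with the schema.org `sameAs` property, which points to a page that unambiguously identifies the same entity. The sketch below builds that markup as JSON-LD; the helper name and the example description are hypothetical, and the more specific the `@type` you can justify, the better.

```python
import json


def entity_jsonld(name: str, description: str, same_as: list[str],
                  entity_type: str = "Thing") -> str:
    """Build a schema.org entity description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": entity_type,
        "name": name,
        "description": description,
        # sameAs lists reference pages that identify this exact entity.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


markup = entity_jsonld(
    name="Eiffel Tower",
    description="Wrought-iron lattice tower in Paris, France.",
    same_as=["https://en.wikipedia.org/wiki/Eiffel_Tower"],
    entity_type="Place",
)
```

The resulting string would go inside a `<script type="application/ld+json">` tag on the entity's page.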

Topical Authority and Content Depth for LLMs

Topical authority means your website is the go-to source for a specific subject. LLMs recognize topical authority when you publish many detailed pages about related subtopics organized using topic clusters.

How to Build Topical Authority

- Pick one main topic for your website, like "home gardening."

- Write at least 20 to 30 pages covering every subtopic: soil types, watering schedules, pest control, seasonal planting, tool reviews, and so on.

- Link all these pages together in a logical way with one hub page that lists every subtopic.

- Update your content regularly to maintain freshness signals — every 3 to 6 months minimum.

- Avoid writing one perfect page and never touching the topic again. LLMs prefer sites with breadth and depth.

How to Optimize for Answer Extraction

Answer extraction is when an LLM pulls a sentence or paragraph directly from your page and uses it in the generated response. This is the closest thing to a "ranking" in LLMs. To maximize answer extraction:

- Write direct answers to common questions in plain language. Start the sentence with the answer, not with fluff or preamble.

- Use question and answer format. Write the full question as an H2 or H3. Write the answer immediately below.

- Keep simple answers to 40 to 60 words. For complex questions, use 100 to 150 words with bullet points.

- Never start answers with "It depends" or "That is a great question." LLMs value directness above all else.

- Break processes into numbered steps. Step-by-step explanations are critical for how-to queries.
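The length and directness guidelines above are easy to check automatically before publishing. This is a minimal sketch; the function names are hypothetical, and the word ranges and weak openings are taken straight from the list above.

```python
WEAK_OPENINGS = ("it depends", "that is a great question")


def check_answer_length(answer: str, complex_question: bool = False) -> str:
    """Compare the word count with the 40-60 (simple) or 100-150 (complex) word range."""
    low, high = (100, 150) if complex_question else (40, 60)
    count = len(answer.split())
    if count < low:
        return "too short"
    if count > high:
        return "too long"
    return "ok"


def has_weak_opening(answer: str) -> bool:
    """Return True when the answer starts with one of the openings to avoid."""
    return answer.strip().lower().startswith(WEAK_OPENINGS)
```

A check like this only measures form. Whether the first sentence actually answers the question still needs a human read.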

The Difference That Gets You Cited

Bad example: "There are several factors to consider when baking bread, including temperature and humidity."

Good example: "The ideal bread baking temperature is 375 degrees Fahrenheit for most standard bread recipes."

Writing for AI: Clear, Direct, Context-Rich Techniques

Writing for AI does not mean writing robotically. It means writing clearly so that a machine can understand your meaning and a human still enjoys reading it. The conversational tone level should feel natural, not forced.

Techniques for Clear, AI-Friendly Writing

- Use active voice. "The dog chased the ball" instead of "The ball was chased by the dog."

- Remove unnecessary adjectives and adverbs. "Very important" becomes "critical." "Really big" becomes "massive."

- Define acronyms on first use. Do not assume the reader or the model knows what "NLP" means. Write "Natural Language Processing (NLP)" the first time, then use the acronym.

- Avoid ambiguous pronouns. Instead of "They said it was good," write "The researchers confirmed the test was successful."

- Write in a neutral or positive tone. AI systems may treat toxic or overly negative content as low quality and deprioritize it.

- One simple test: Read your sentence aloud. If you stumble or need to reread it, rewrite it.

Semantic SEO, NLP, and Contextual Relevance

Semantic SEO means optimizing for meaning instead of just keywords. Natural Language Processing (NLP) is how AI understands the meaning behind your content.

If your main topic is "coffee brewing," do not just repeat the phrase "coffee brewing" 50 times. Instead, write about coffee grounds, water temperature, brew time, filter types, bean freshness, and grinding methods. A page about "how to fix a leaky faucet" that never mentions "washer" or "O-ring" will look incomplete to an LLM — this is a content gap in your semantic coverage depth.
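A simple way to spot this kind of content gap is to compare a page against a list of terms you expect a complete treatment to mention. The sketch below assumes you supply that list yourself; the function name and sample text are hypothetical, and the match is on whole words only, so plurals and synonyms would need to be listed separately.

```python
import re


def missing_terms(page_text: str, expected_terms: list[str]) -> list[str]:
    """Return the expected terms that never appear in the page as whole words."""
    text = page_text.lower()
    missing = []
    for term in expected_terms:
        # re.escape keeps hyphens and other punctuation in the term literal.
        pattern = rf"\b{re.escape(term.lower())}\b"
        if not re.search(pattern, text):
            missing.append(term)
    return missing


page = "Turn off the water, then replace the worn washer under the handle."
gaps = missing_terms(page, ["washer", "O-ring", "valve seat"])
```

Here `gaps` contains "O-ring" and "valve seat", the two concepts the sample page never mentions.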

Free NLP Tools to Try

Google NLP API Demo

Reveals the entities and concepts your content currently communicates. Shows entity relevance scores for each term detected in your text.

TextRazor

Analyzes your content for entities, relations, and topics. Identifies which concepts are missing from your semantic coverage.

MeaningCloud

Provides topic detection, entity extraction, and sentiment analysis. Useful for auditing whether your content matches the expected context for a query.

How Internal Linking Improves LLM Understanding

Internal links are the roads that connect your content. Without them, LLMs see isolated pages. With them, LLMs see a complete website about a topic.

Best Internal Linking Practices for LLMs

- Link from general pages to specific pages. A page about "baking" should link to your "bread recipes" and "cookie recipes."

- Use descriptive anchor text. Instead of "click here," write "learn more about sourdough fermentation."

- Link between related entities. A page about "coffee brewing methods" should link to "best coffee beans for espresso."

- Create topic clusters. One pillar page covers the broad topic. Ten cluster pages cover specific subtopics. Every cluster page links back to the pillar page.

- Avoid orphan pages. Every page on your site should have at least one internal link pointing to it.
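Orphan pages are straightforward to detect once you have a map of which page links to which. The sketch below assumes a hypothetical dictionary that maps each page URL to the internal URLs it links to, such as you might export from a site crawler.

```python
def find_orphans(links: dict[str, list[str]]) -> list[str]:
    """Return pages that no other page links to, sorted alphabetically."""
    linked_to = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a page linking to itself does not count
    }
    return sorted(page for page in links if page not in linked_to)


site = {
    "/baking": ["/bread-recipes", "/cookie-recipes"],
    "/bread-recipes": ["/baking"],
    "/cookie-recipes": ["/baking"],
    "/old-post": ["/baking"],
}
orphans = find_orphans(site)
```

In this example `orphans` is `["/old-post"]`: the page links out, but nothing links to it. A home page that is reached only through navigation would also be reported, so filter it out if your link map excludes menus.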

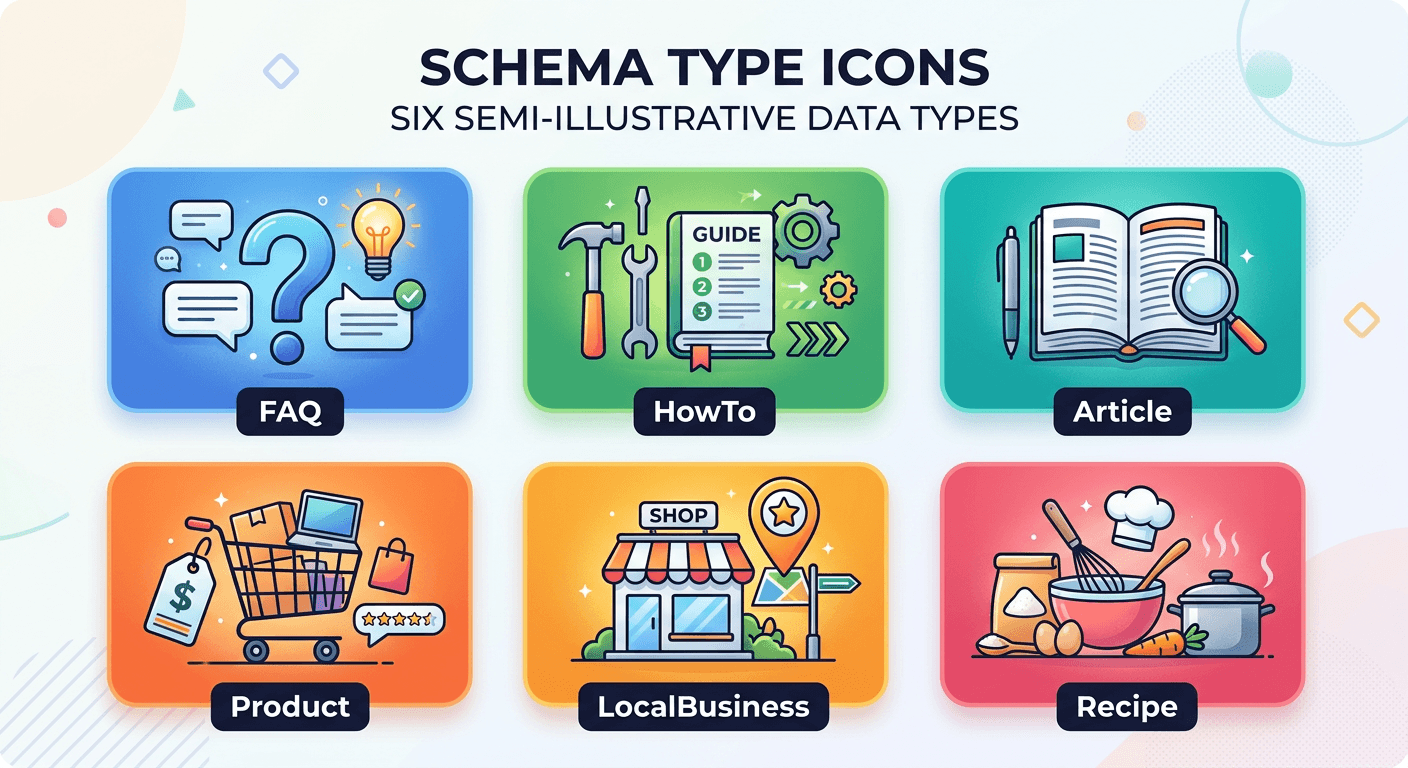

Structured Data and Schema for AI Interpretation

Structured data is code you add to your website that tells search engines and AI exactly what your content means. It is like giving the LLM a cheat sheet. Schema markup written in JSON-LD is the preferred format.

Most Valuable Schema Types for LLM Ranking

FAQPage

For question and answer pairs. Directly maps your content to the Q&A format LLMs prefer for answer extraction.

HowTo

For step-by-step instructions. Makes numbered processes extractable as structured data instead of unformatted prose.

Article

For news and blog posts. Signals publication date, author credentials, and content category to the retrieval system.

Product

For e-commerce items. Includes price, availability, and reviews — all attributes LLMs use to answer product queries.

LocalBusiness

For physical locations. Includes address, hours, and phone — critical for local LLM queries like "best coffee shop near me."
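As an illustration of what this markup looks like, the sketch below generates JSON-LD for the FAQ type, which is named `FAQPage` in the schema.org vocabulary. Each question is a `Question` whose `acceptedAnswer` is an `Answer`. The helper name and the sample question are hypothetical.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


faq_markup = faq_jsonld([
    ("What is tokenization?",
     "Tokenization is the process of breaking text into smaller pieces called tokens."),
])
```

Place the output inside a `<script type="application/ld+json">` tag, and keep the marked-up questions and answers identical to the text visible on the page.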

Building Trust Signals (E-E-A-T) for LLM Recognition

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) is the quality framework Google uses to evaluate content. LLMs appear to favor similar signals. Content accuracy and fact-checking are critical components.

How to Demonstrate E-E-A-T to LLMs

- Publish author bios that show real credentials. "John has 10 years of experience as a plumber" is better than "John likes writing about plumbing."

- Cite trustworthy external sources. Link to government sites, academic papers, and industry leaders. Citation quality matters more than quantity.

- Update your About Us page with real team photos and contact information. This improves transparency — a key trust signal.

- Remove outdated or incorrect content. Sites that spread misinformation are less likely to be trusted and cited. Keep your content accuracy rate as close to 100% as possible.

- Add customer reviews, case studies, and before-and-after photos. These give service-based businesses verifiable evidence for their claims.

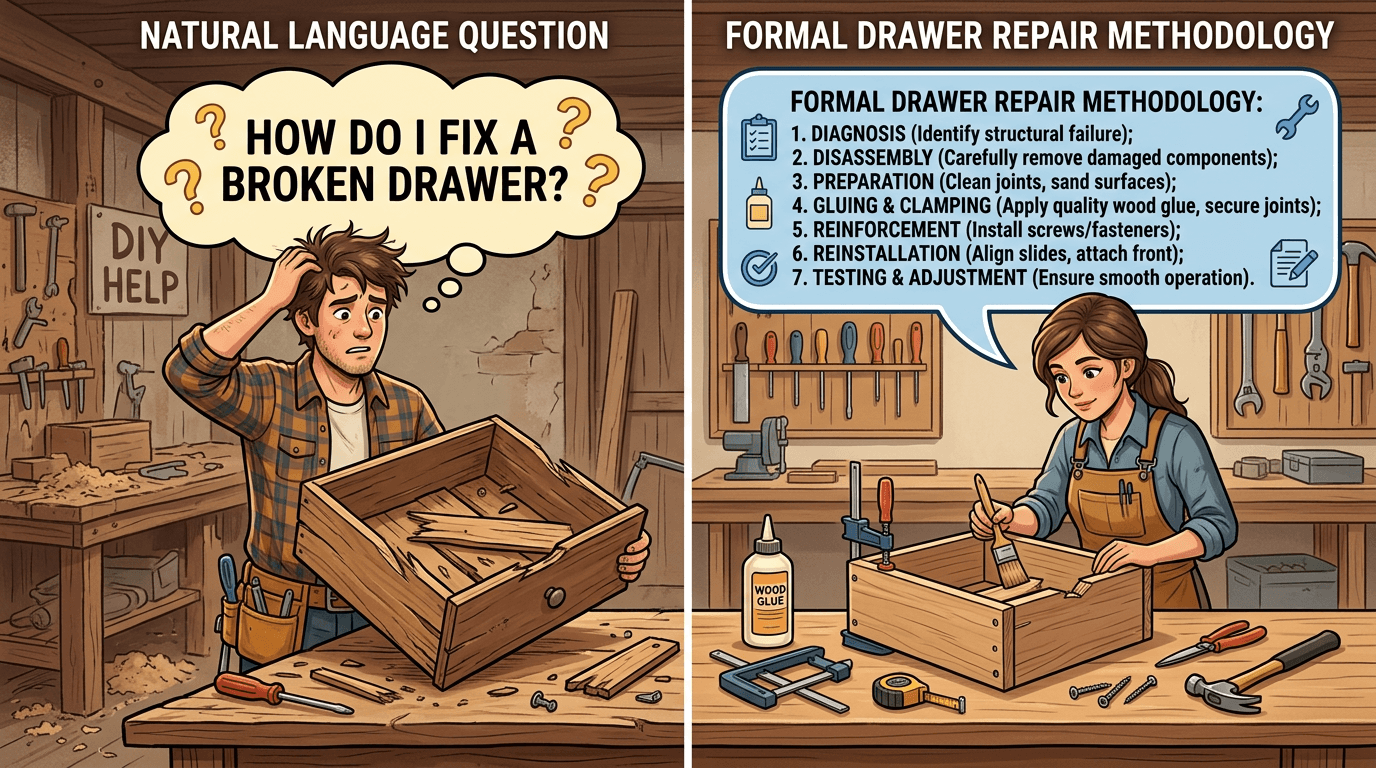

Optimizing for Conversational Queries

People ask LLMs questions the way they would talk to a friend: "How do I fix a broken drawer?" not "Drawer repair methodology." Your content must match this conversational style. The same applies to voice search through Siri, Alexa, and Google Assistant.

Optimization Tips for Conversational Queries

- Include full question phrases in your headings. "How to fix a broken drawer" as an H2.

- Write answer paragraphs that sound like one person explaining to another. Use "you" and "your" to make it personal.

- Add a "People Also Ask" section manually on your page. List 5 to 10 related questions and answer each one briefly.

- Use natural transitions. "Now that you know how to fix the drawer, let us talk about preventing future damage."

- Avoid overly academic or legalistic language. Write at an 8th-grade reading level unless your audience expects advanced vocabulary.

Content Formatting That LLMs Prefer

Content formatting is not just for humans. LLMs use formatting to understand what is important. The structure format you choose directly impacts how well the model extracts information.

Preferred Formats for LLM Extraction

- Lists for multiple items of equal importance. LLMs extract each list item separately.

- Tables for comparing data. LLMs read tables row by row. Use a clear header row and consistent data types.

- Definitions for key terms. Write "Definition: Tokenization is…" on its own line.

- Q&A blocks for direct answers. Write the question bolded or as a heading. Write the answer in normal text underneath.

- Code blocks for technical instructions. LLMs preserve code blocks exactly as written.

- Alt text for charts and graphs. Text-based retrieval systems often cannot interpret images, so the alt text provides the data context.

External References and Citations in AI Trust

LLMs pay attention to which external sources you cite. Linking to high-authority websites lends some of that credibility to your content. Citations are among the stronger trust signals for AI systems.

How to Use External References Correctly

- Link to at least 3 to 5 external sources per long-form article. Choose .gov, .edu, or major news sites.

- Do not link to low-quality directories, spammy forums, or irrelevant sources.

- Cite specific statistics with their source. "According to a 2024 study from Stanford University…" is better than "Studies show…"

- Link to contradictory viewpoints and explain why your position is correct. This shows confidence and thoroughness.

- Avoid linking only to your own content. External links demonstrate that you engage with the wider community.

Updating Content for Freshness and Continuous Relevance

Freshness is not just for news sites. LLMs track when your content was last updated and prioritize recent information for time-sensitive queries. Managing this is part of a healthy content lifecycle.

Freshness Strategy

- Set a calendar reminder to review every page on your site every 6 months.

- Update statistics to the most recent year available. Replace 2022 figures with the latest published figures and cite the new year, rather than only changing the date label.

- Add a "Last updated" date at the top of each page. LLMs can read this date directly.

- Remove outdated examples. A social media marketing page from 2019 should not still focus on Instagram as a photo-only app.

- When you update a page, change at least 20% of the content. Minor typo fixes do not count as freshness updates.
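Both the review interval and the 20% guideline can be checked with Python's standard library. The sketch below assumes 183 days as an approximation of 6 months and measures change at the word level with `difflib`; both choices, and the function names, are assumptions rather than anything an AI platform publishes.

```python
from datetime import date
from difflib import SequenceMatcher


def is_stale(last_updated: date, today: date, max_age_days: int = 183) -> bool:
    """Return True when the page was last updated more than max_age_days ago."""
    return (today - last_updated).days > max_age_days


def changed_fraction(old_text: str, new_text: str) -> float:
    """Estimate the share of words that differ between two versions of a page."""
    matcher = SequenceMatcher(None, old_text.split(), new_text.split(), autojunk=False)
    # ratio() is 1.0 for identical word sequences and 0.0 for no overlap.
    return 1.0 - matcher.ratio()


def counts_as_refresh(old_text: str, new_text: str, threshold: float = 0.20) -> bool:
    """Apply the guideline that at least 20% of the content should change."""
    return changed_fraction(old_text, new_text) >= threshold
```

Replacing three words in a ten-word passage gives a changed fraction of about 0.3, which clears the threshold, while a single typo fix in a long article does not.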

Common Mistakes That Prevent LLM Ranking

Avoid these errors if you want LLMs to notice your content.

Tools for LLM Optimization and Content Analysis

Use these tools to analyze and improve your LLM visibility.

| Tool | Purpose | Cost |
|---|---|---|
| Frase.io | Content optimization for AI answers | Paid |
| Surfer SEO | Entity and NLP analysis | Paid |
| Google NLP API | Entity and sentiment detection | Free tier |
| SEMrush | Keyword and entity recommendations | Paid |
| RankMath | Schema markup and internal linking | Free and paid |
| ChatGPT | Test your own content visibility | Free |
| Perplexity AI | See which sources appear for your queries | Free |
| Google Search Console | Monitor indexing and crawl behavior | Free |
| Google Analytics | Track engagement signals | Free |

Case Studies: Websites Ranking Well in AI Search

35% of Health-Related ChatGPT Answers Cite Healthline

According to a 2024 analysis, Healthline appears in approximately 35% of health-related ChatGPT answers. Their strategy includes detailed author bios with medical credentials, clear Q&A formatting, and regular freshness updates every 3 months. Their topical authority in health is unmatched.

NerdWallet Dominates Personal Finance in Perplexity AI and Google Gemini

NerdWallet's strategy centers on comparison tables. LLMs love extracting data from well-formatted tables. They also use FAQ schema on every money-related page. Their content-query alignment is excellent with exact intent matching throughout.

300% AI Citation Increase in 6 Months

A family-owned HVAC blog increased AI citations by 300% in 6 months. They added entity links between furnace, air conditioner, heat pump, and thermostat pages. They also added HowTo schema for every repair guide. Their information gain was high because they offered unique local knowledge that national sites lacked.

AI Search Engines: Platform-by-Platform Breakdown

| Platform | Retrieval Method | Key Preference | Freshness |
|---|---|---|---|
| ChatGPT (OpenAI) | RAG with Bing search | Conversational, well-structured, clear entities | Varies by version |
| Google Gemini | Hybrid, Google index | E-E-A-T, freshness, good Google rankings | Frequently updated |
| Perplexity AI | Live web search | Academic citations, external references, footnotes | Real-time |
| Microsoft Copilot | Bing integration | Recent dates, Microsoft 365 data | Real-time from Bing |

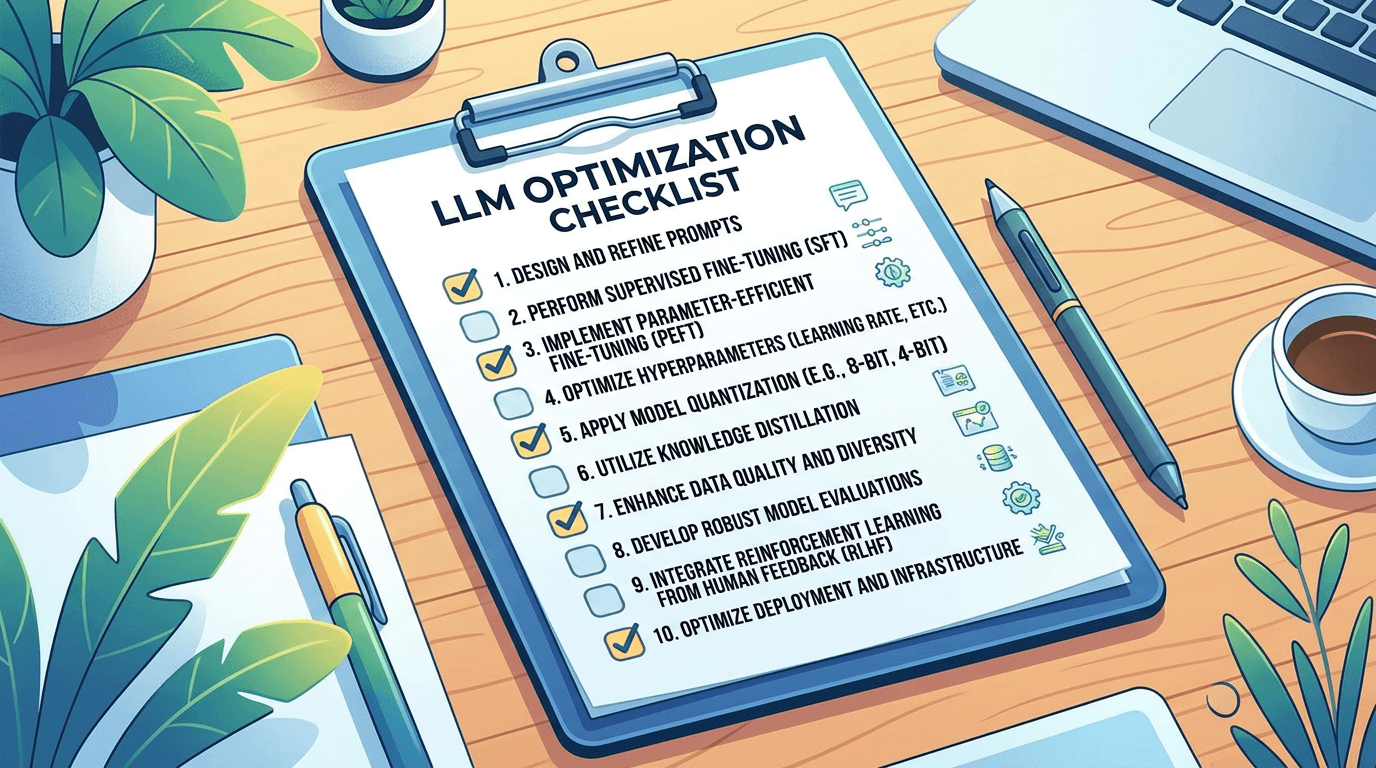

LLM Optimization Checklist

Use this checklist before publishing any new page or after auditing existing content.

Content Audit Framework for AI Optimization

Run this audit on your existing content every 6 months. This is a structured review that includes content gap analysis and topical map completeness checks.

- Step 1: List your top 50 pages by traffic from Google Analytics. Check engagement rate metrics like time on page and scroll depth.

- Step 2: For each page, ask: Does this page directly answer the question in its title? If no, rewrite the first paragraph to answer immediately.

- Step 3: Check entity density. Use Google NLP API. If important entities are missing, add a definitions section.

- Step 4: Review internal links. Every page should link to at least 3 other related pages. Measure internal link strength.

- Step 5: Update all statistics to the current year.

- Step 6: Add missing schema. Verify structured data quality using Google's Rich Results Test.

- Step 7: Test your page manually. Ask ChatGPT, "What is [your page topic]?" See if your content appears. If not, add clearer answers and more entities.
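Parts of this audit can be tracked in a simple record per page. The sketch below covers Steps 2, 4, and 6 only, and assumes a human reviewer fills in the fields; the `PageAudit` structure and its field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PageAudit:
    url: str
    answers_title_question: bool  # Step 2, judged by a reviewer
    internal_links: list[str] = field(default_factory=list)  # Step 4
    has_schema: bool = False  # Step 6


def audit_issues(page: PageAudit, min_links: int = 3) -> list[str]:
    """Return the follow-up actions this page still needs."""
    issues = []
    if not page.answers_title_question:
        issues.append("rewrite the first paragraph to answer the title question")
    # Count distinct targets so repeated links to one page are not double counted.
    link_count = len(set(page.internal_links))
    if link_count < min_links:
        issues.append(f"add internal links (has {link_count}, needs {min_links})")
    if not page.has_schema:
        issues.append("add schema markup")
    return issues
```

Running `audit_issues` across your top 50 pages gives a prioritized to-do list; a page that returns an empty list passes these three checks.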

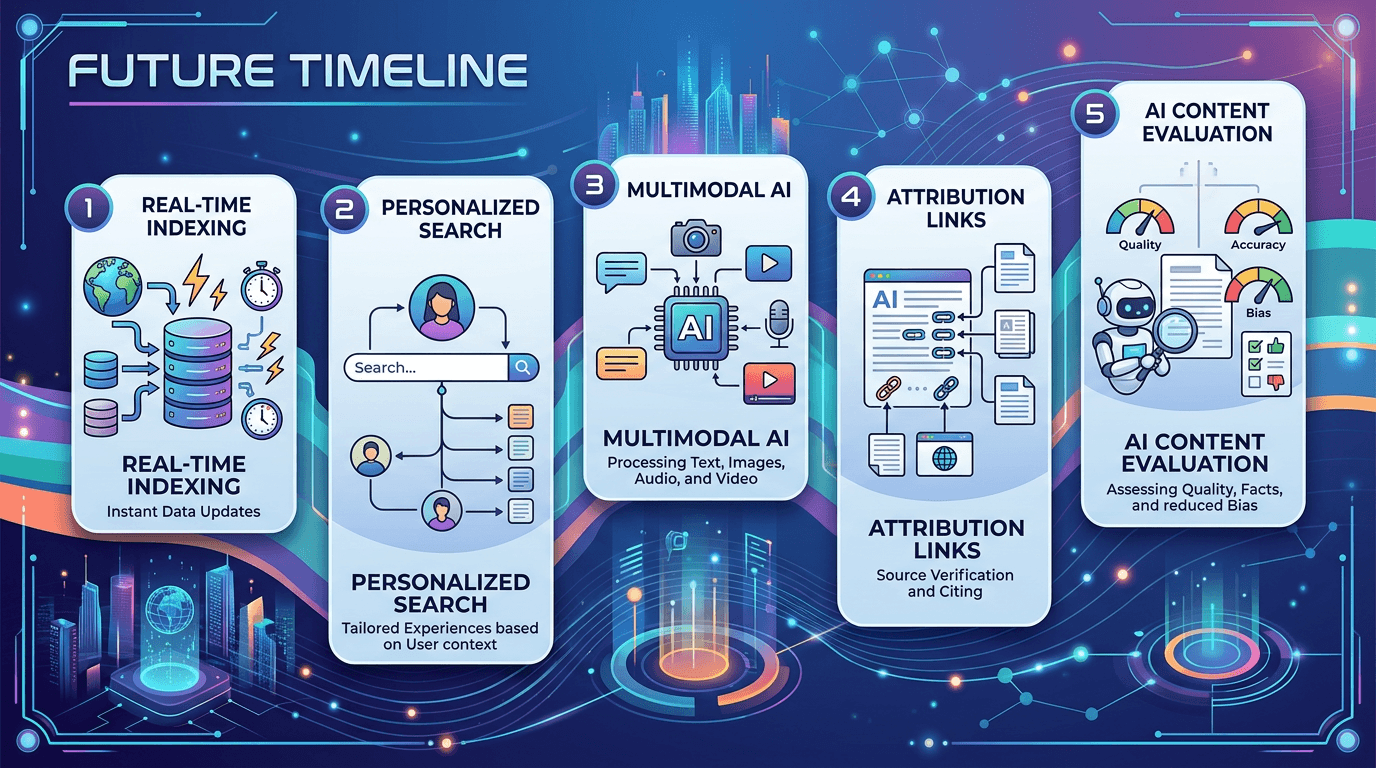

Future Trends in AI Search and LLM Ranking

AI search is changing fast. Here is what is coming and how to prepare for it.

Real-Time Indexing

LLMs will increasingly retrieve live web content instead of relying only on static training data. This reduces the problems caused by knowledge cutoff dates.

Personalized Search

LLMs will tailor answers based on user location, history, and preferences. Content targeting specific audience segments will gain an advantage.

Multimodal AI

LLMs will accept images, voice, and video as queries. Prepare now by adding alt text, transcripts, and descriptive captions.

Attribution Links

More LLMs will show direct citations and links to source websites, driving actual referral traffic. AI citation probability will become a key metric.

Voice Search Growth

As AI assistants proliferate in homes and cars, voice search compatibility will become even more important. Write for spoken answers now.

Glossary of Key LLM Terms

| Term | Simple Meaning |
|---|---|
| Large Language Models (LLMs) | AI models trained on vast amounts of text to understand and generate human-like language |
| Generative AI | AI that creates new content (text, images, or code) based on patterns learned from training data |
| Retrieval-Augmented Generation (RAG) | A method where an LLM searches for relevant information before generating an answer |
| Transformer Architecture | The neural network design that powers most modern LLMs, using attention mechanisms |
| Tokenization | The process of breaking text into smaller pieces called tokens for processing |
| Context Window | The amount of text an LLM can consider at one time, typically 8,000 to 128,000 tokens |
| Prompt Engineering | The practice of designing effective inputs to get desired outputs from LLMs |
| Answer Engine Optimization (AEO) | Optimizing content to directly provide answers in AI-generated responses |
| GEO (Generative Engine Optimization) | The discipline of optimizing content to be cited in LLM-generated answers |
| E-E-A-T | Experience, Expertise, Authoritativeness, Trustworthiness: Google's quality framework also used by LLMs |
| Knowledge Graph | A database that stores interconnected entities and their relationships |
| Hallucination Rate | The frequency with which an LLM generates factually incorrect information; target is as low as possible |
| Extractability | How easily an AI can pull a direct answer from your content; target is high with clear answer blocks |

Frequently Asked Questions

Most websites see changes within 4 to 8 weeks after major content updates. LLMs re-crawl periodically but not instantly. Check Google Search Console for indexing activity to track progress. Real-time monitoring helps you see when new content is being discovered by AI retrieval systems.

Yes, indirectly. Backlinks improve your brand authority and crawl frequency. LLMs notice when major sites link to you as a form of author authority. High-quality relevant references from trusted sources remain valuable signals even in the AI search era.

Yes. Niche sites with focused topical authority and strong content depth often outrank generalist big brands for specific questions. Information gain is often higher on smaller, specialized sites because they contain unique local or expert knowledge that large generic sites lack.

Only if you provide transcripts. LLMs cannot watch videos but can read video transcripts and captions. Multimodal capability is improving across platforms, but text remains the primary medium. Add full transcripts to every video you publish to make video content visible to AI retrieval systems.

Every 6 months for evergreen topics. Every 2 weeks for news or trending topics. When you update a page, change at least 20% of the content — minor typo fixes do not count as freshness updates. Always update the "last updated" date so LLMs can read it directly.

Traditional SEO focuses on getting clicks from search result pages. GEO (Generative Engine Optimization) focuses on getting your content cited or referenced inside AI-generated answers. SEO is about ranking in a list. GEO is about becoming the source that AI systems trust and quote when generating responses.

A 2024 study by SparkToro found that 62% of LLM citations came from content shared on at least 3 different platforms, compared to only 18% for content published on a single website. Submit your sitemap to Bing Webmaster Tools, share excerpts on LinkedIn and Medium, post on Reddit and Quora, and earn backlinks from news sites.

Let Webperts Help You Rank in LLMs and AI Search

Getting better results and higher rankings in LLMs comes down to clarity, structure, and entity-rich content. The Webperts team has developed proven methods and strategies to help websites like yours rank higher in LLMs and AI search results. Their comprehensive audit process includes content gap analysis and technical reviews of your structured data — identifying exactly what your content is missing: entity gaps, structural issues, schema errors, and freshness problems. Many website owners praise the Webperts team for their straightforward approach and measurable results. Let them help you turn your content into a trusted source for AI answers.
